Professor Claudio Luchinat, University of Florence and co-founder of the Magnetic Resonance Center (CERM), speaks to News-Medical about his research in NMR-based metabolomics.

Please can you give me a brief overview of your research interests?

We started in theoretical inorganic chemistry and moved to bioinorganic chemistry, looking at metals in proteins, enzymes and so on. About 30% of all the proteins that we have are metalloproteins, so it’s a huge contribution that inorganic chemistry is providing for life.

We started looking at the structures of metalloproteins. We have experience with metals, so we are one of a handful of centers where this is done and, over decades, we have developed experience in metal ions in biological systems.

From that, we moved on to investigating more complex systems: intrinsically disordered proteins (IDPs) on one side and protein-protein interactions, up to the cellular level, on the other side. Since the NMR technique is very versatile, we decided, about ten years ago, to move on to metabolomics as well.

This field has been growing worldwide, so we have made a lot of progress. Besides the fact that we enjoy doing this work, I think we also have dreams, because we see that there is an enormous potential for metabolomics and this potential is, according to us, largely unexplored.

What can be done to improve the study of metabolomics? How will this affect the applications of this field?

There is a lot to discover with relatively little effort, but we have to broaden the investigation to increase the number of subjects and the number of samples by orders of magnitude, otherwise we will not get anywhere.

If you do that, then the prices will go down further. Already now, it is not so expensive to run a single NMR experiment, so you can even dream of doing that on a population basis.

If that is successful, then you may start thinking of a fingerprint for each individual and, if you start at an early age, when people are usually healthy, then you can follow people over years and maybe detect when a disease is emerging.

We have examples where you can actually detect a disease early, where early detection is essential to save people. This is largely unexplored and not even so well understood by many people. You can do metabolomics in different ways; you can use NMR or mass spectrometry.

What are the major differences between mass spectrometry and NMR in metabolomics studies? What advantages does NMR provide?

Mass spec is more powerful in the sense of the number of metabolites you can detect, because it’s more sensitive. However, quantitation is a little more cumbersome because you need to standardize the measurements and you need to have labeled reference compounds to run together with your compounds.

With NMR, on the other hand, you just take any sample and there is little preparation (buffering, filtering, centrifugation). Then you get a fingerprint, which is absolutely reproducible, within around 1%.

It’s better than any other analytical technique and it’s cheap because you can take a single spectrum and that spectrum is the fingerprint of the individual at the time you took it. At the time, the individual may or may not have developed various diseases.

If you have a database which is large enough, you can compare different fingerprints of different diseases and tell a person “You are okay, but you are moving towards this or that disease. Go and get a more specific test or check.”

Why would you choose one system over another?

If you look at the literature, more than 80% of metabolomics papers are by mass spec and less than 20% are by NMR. There’s nothing wrong with that; mass spec is more sensitive and you see more metabolites, but the quantitation is much more difficult.

It’s much less high throughput and less automated than NMR. In my view, mass spec is therefore kind of a second line. You do wide screening by NMR to get information and then you say: “This is interesting. Let’s see. I’ve identified these three or four metabolites that are altered by this disease.”

Then you want to see more and so turn to mass spec and ask a specific question. I want to see other metabolites in this pathway, some of which may be present in a very tiny amount. Then you can use mass spec, because it’s targeted analysis and you can actually aim at identifying more metabolites because that would reinforce your theory or hypothesis and so on.

NMR is for research and for learning more about the biology, as well as for diagnosis. It’s a single spectrum on a sample that has been collected properly once. You just need to centrifuge, add buffer and go.

It’s the simplest thing you can do and it’s faster. Despite the cost of the instrument, a single analysis, once you spread that cost over the lifetime of the instrument and include the cost of the technician and so on, costs significantly less than mass spec.

NMR has the advantages of high throughput, the simplicity of handling the sample and the fact that one spectrum is enough. On the other hand, with mass spec, sometimes you need to spike with the same compound, containing a heavier isotope, in order to have the peak of the same compound but isotope-labeled next to the real peak, in order to quantify by comparing the intensities, because you need an internal comparison.

You cannot compare a spectrum taken yesterday with a spectrum taken today. It’s a kind of drawback, but, depending on what you need to do, it may be better, although it’s not a universal screening method, I think.

Maybe some colleagues in mass spec will say: “No, you are wrong, we are actually doing better.” However, my feeling and the feeling of the people who are doing both, is this: metabolomics by mass spec is something different. For instance, if you look at tissues, the amounts are not so large and you need to identify as many as possible. You need to have a working hypothesis too because if you want to look at hydrophobic compounds, you need to do the experiment differently than if you wanted to look at hydrophilic compounds.

So, there are several mass spec experiments and even several different machines. Some mass spec machines are better for one thing and some are better for another. With NMR, on the other hand, there is one instrument and it does everything. Of course, it does it with less sensitivity, but also with great reproducibility and ease of use.

Recently you founded Giotto Biotech. Can you give me a brief overview of how it was set up, what your motivations were and what Giotto Biotech aims to achieve?

The potential of NMR for metabolomics is something that is really exciting. There are five hundred million people in Europe, which means five hundred million spectra per year, or maybe every two years. It’s many orders of magnitude greater than what you can do now, but it’s doable and if you do it, then it means a revolution in the health system.

The CERM/CIRMMP institution provided the initial means to start producing proteins. Giotto Biotech was aimed at producing proteins for research and, since we happened to know many colleagues around the world who do more or less what we do, we knew our customers already.

We started selling proteins, as well as selling the expertise for labeling proteins for NMR. That’s something that not many companies in the world do. If someone is not themselves an expert in proteins, but wants to do NMR on a protein, they need to buy a protein that is labeled with stable isotopes in order for it to be studied by NMR.

Image Credit: Bruker BioSpin Group

We also sell services and this is the connection with metabolomics. People can come to Giotto Biotech if they want to run experiments and use the instruments.

Giotto Biotech often tests metabolites in urine. Can you give a brief overview of the challenges and advantages of urine analysis using NMR?

One of the big challenges is being able to collect urine at home and still get a reproducible analysis. The samples have to be prepared, sent to a specialized center and handled in exactly the same way, which is difficult.

However, if that can be achieved, then you would open up population-wide screening, which is very cheap, mainly because of the savings on sample collection. Imagine having people walk into the hospital to have blood drawn; it’s much easier if you can collect urine.

Many people are skeptical about urine because, being a waste product, urine is very variable, with the concentration of metabolites going up and down. However, this is also its richness, because if you can understand why this is going up and down, you can achieve a very accurate fingerprint of what is going on in the body.

Also, urine is amazingly stable. We published our first paper on the topic with Manfred Spraul and other colleagues of Bruker in 2006. Here, with our post-doc students, we got about thirty people to bring a urine sample every morning for around two months.

We collected an average of forty samples per person over a limited period and we ran the spectra, without even caring about identifying all the metabolites at that time; we were just using the spectra as fingerprints.

A spectrum is a fingerprint; it’s a collection of signals and it is very clear that each individual has his or her own fingerprint, despite the fact that there is huge variability from one day to the next. The variation occurs because, of course, you see different signals depending on what you eat, another signal when you chew gum, and another when you take paracetamol, for example.

Although there is a lot of variability, underneath there is a kind of stable ground, which is really individual. What is amazing is that we have repeated this with some of the same individuals over the years and we can still recognize each one with 97% accuracy, after ten years. Urine is as good as blood in terms of stability, although on the surface it seems much more variable.

It is variable to some extent, but there is still a lot which is really pertinent to a single individual. What is nice about it being so stable is that, although it is insensitive to unimportant things like getting a cold, for example, if you get something serious, then it shows up.

We have cases where we find we cannot recognize the individual anymore and then we discover that they had a strong bacterial infection, they took a lot of antibiotics, killed all their intestinal flora and, of course, the profile was changed.

However, after two months they are back to where they were and again we recognize them with 97% accuracy. It’s the best that you can hope for, because it is insensitive to tiny things, but sensitive to important things, which has implications for diagnosis of disease.

Can you give me some examples of clinical applications of this research?

I can give you a couple of enlightening examples. After surgery for breast cancer, many women fortunately recover, but some relapse. They may develop metastasis or another tumor and so on. In one study that we performed with an oncologist, we took blood samples from women who had breast cancer before surgery, so before their tumor was removed.

These women were followed for nine years. We found that, just by looking at a single fingerprint of blood, we could predict which women would have metastasis and which ones would not, with an accuracy of about 85%. That is truly amazing.

This is very important because if you were able to predict the risk that metastasis would develop, you could decide on the most appropriate therapy. You could decide whether hormone therapy or radiotherapy is needed or whether heavy chemotherapy is needed. There is nothing magical about it; it’s simply that people are individual and have their own fingerprint.

You’re not detecting metabolites that come from the tumor, which is sometimes only 3 mm in size and a tiny thing. How can you possibly detect signs of this tumor in the blood? It’s not the tumor, it’s the reaction of the individual’s immune response to the presence of this hostile object that is amplifying the signal. What I think you’re actually detecting is the response of the individual to the tiny tumor.

The response may be different whether this tumor is already spreading or not, so it’s there, even if you are unable to detect metastasis because the tumors are too small. I think this is truly amazing and this is just one example.

The main problem with urine is that signals are never in the same place. Urine is a complex fluid and, unlike blood, it’s not regulated by any homeostasis or self-regulation of the body, because urine is waste, so the body doesn’t care.

Urine is always different, yet the composition is, by and large, the same. It is estimated that there are about two thousand metabolites in urine, some of which are abundant. Using NMR, you can identify maybe 100 or 150 metabolites. Using mass spec, you can do even more, but it’s more cumbersome.

Because the signals are never in the same place, it’s very difficult to recognize each of them automatically. You have to look at the spectra one by one and ask “in this case, maybe this signal comes from this metabolite, or does it come from another one?” It is this difficulty in interpreting the spectra that is really preventing urine being used as one of the main bodily fluids.

What is being done to improve this analysis?

As a chemist, I thought that if signals move, there must be a reason and that the reason is a chemical reason. Signals move because, depending on the composition of urine, there are interactions among metabolites that make them appear in slightly different places in the spectra.

Therefore, we started modeling these interactions among all possible metabolites. Not two thousand, but maybe one hundred. We started seeing patterns and found that we could predict where a given metabolite will be in a particular sample.

This opens the way for really automated assignment. It’s something that we have discussed a lot with Bruker over the last year and, finally, we made a small agreement with Giotto. We have a post-doc in Giotto who is actually dedicated to this and I’m really confident that, within less than a year, we will have a very powerful way to interpret urine.

One of the benefits of this is that it is absolutely non-invasive: you produce urine anyway, whereas blood has to be drawn from a person.

If you were to follow a healthy person and you saw a change, would you be able to tell what the change is and what the possible disease is?

This is exactly the dream we have. For the moment, we have a fingerprint of breast cancer and if I get a sample, I can predict whether a woman will have a relapse or not, with 85% probability.

From that exact same fingerprint, with the same spectra from the same sample, I can predict another disease, provided that I have done the same work for that disease. Another example that is very meaningful concerns a form of heart failure called idiopathic heart failure, which does not arise as a consequence of something that you had such as a previous infarction, coronary artery disease or high blood pressure for decades.

Idiopathic heart failure is a kind of heart failure that you are somehow born with. It’s like a defect of the heart. The heart doesn’t work exactly as it should, but you are actually compensating for that. Interestingly, the Italian word for heart failure is … “uncompensated heart,” which gives the idea that you may have this disease for decades without really knowing because you are compensating, for instance, by accelerating the heartbeat.

Although this may not be the case and you are actually just born with a faster heartbeat, it may also be that you’re actually compensating for a defect and if you don’t detect it early enough, at some point you’ll develop heart failure. By the time you get the symptoms such as panting after climbing stairs, then it may be too late. And there is no cure for heart failure.

However, if you detect it very early on, you can intervene with pills that lower the heartbeat and lower the blood pressure. You can keep the heart under control in such a way that it’s not fatigued and then the person can have a normal lifespan. However, if you detect it when it has already advanced, there is no cure and it declines until you die from it.

It would obviously be very interesting to get a fingerprint of heart failure, so we looked for that. We looked at people who had heart failure of different degrees. According to the New York Heart Association, there are four classes, ranging from very mild to very severe.

We also collected controls; people who maybe had other kinds of cardiovascular problems, but not heart failure. We could detect a big difference between controls and people with heart failure, but what was very surprising, was that usually when you see a fingerprint of a disease, the fingerprint becomes more and more evident with the progression of the disease.

This was not the case for heart failure. We had a fingerprint of heart failure that was equally strong for people with mild heart failure and for people with severe heart failure. There was no progressive increase in the fingerprint, so we started wondering if what we were seeing was not the fingerprint of the disease, but a fingerprint of what was there before the disease, maybe something that was there since childhood that the person was not aware of.

What you’re detecting is the fingerprint of the organism that’s trying to compensate for this defect. That fingerprint is the same. Since we already had a collection of about a thousand blood samples from other totally unrelated studies, we looked at them and we found something like twenty individuals who had, according to our fingerprint, the fingerprint of heart failure.

Of course, these twenty people were difficult to reach because they were part of other studies and they were not followed any longer, but our colleagues in the hospital managed to get hold of eleven out of these twenty people.

Those eleven were asked if they would like to take a free check of their heart using echocardiography, which is the main way to detect heart failure. They had the examination and six of the eleven people had a result that was typical of heart failure, and echocardiography is a real diagnostic measure.

It’s a small number and not something you can call statistically significant, but six out of eleven is a large proportion. Twenty out of a thousand is about right, which means if you take a thousand people in the street, there is a chance of establishing that about twenty of them have heart failure they are unaware of.
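As a rough illustration of why six out of eleven is suggestive but not conclusive, the sketch below computes the two proportions quoted above and an approximate 95% interval for the second one. The Wilson score interval is a standard textbook choice made here for illustration; it is not something the interview says was used, and the variable names are hypothetical.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Approximate Wilson score interval for a proportion (z=1.96 ~ 95%)."""
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half

# Figures quoted in the interview.
flagged, screened = 20, 1000      # samples carrying the heart-failure fingerprint
confirmed, rechecked = 6, 11      # echocardiography typical of heart failure

flag_rate = flagged / screened          # share of stored samples that were flagged
confirm_rate = confirmed / rechecked    # share of re-examined people confirmed
low, high = wilson_interval(confirmed, rechecked)

print(f"flagged: {flag_rate:.1%}")
print(f"confirmed: {confirm_rate:.1%} (approx. 95% interval {low:.0%} to {high:.0%})")
```

With only eleven people the interval is very wide, which is consistent with the caution expressed above about statistical significance.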

This applies to metabolomics in general; we do not aim at finding a way of detecting disease that is better than anything that is around, because there are many specific tests. We have the fingerprint of severe heart disease, but there are very specific tests for severe heart disease, so why should we have another one?

Also, if a doctor suspects heart failure and sends you for an echocardiography, you find out anyway, so why do you need another way? The answer is because this is a single spectrum. With the single spectrum, we now have the fingerprint of relapse of breast cancer, of heart failure (a very early one) and in principle of many other severe diseases.

Suppose you had carried this out on a much larger, population-wide scale and you had a fingerprint, not of three, four, five or six diseases, but of many diseases. Of course, you would start with the most common, but you could go down and down to less common diseases. Then, with a single spectrum, you can compare a fingerprint of heart failure with one of no heart failure; you can compare a fingerprint of breast cancer with one of no breast cancer. However, if you compare with a fingerprint of something and you say “well, this could be this,” then you can direct a person to a specific test. It’s therefore a kind of pre-screening that is very cheap. It only involves one screen and yet it’s good for all diseases.

Out of the diseases that we have examined, I think in five out of six cases, we have a good fingerprint. It’s remarkable.

This depends on our database. The more cases you examine, the better the database becomes and the more predictive a single spectrum taken in the next one, two or three years will be. It’s something that will feed itself.

Is that something that, in the future, with this database, you could then start to screen newborns or children?

Yes, starting from newborns and I think Bruker has good experience with newborns. Manfred Spraul has published very good data on newborns, so starting from newborns and then moving on to children and adolescents is possible.

How could this affect the global population? What effect would screening everyone have on the global healthcare system?

It’s universal, because it’s one spectrum. In principle, you can refer this person to many different tests, specifically. It’s something that we should not talk so much about to agencies that fund research; we should talk to the health system, because a huge amount of money is spent every year on health in Europe and in any developed country, as well as in the US now.

ObamaCare is increasing the expenditure, which, personally, I think is good. It’s a huge amount of money, it’s orders of magnitude more than what you spend on research. So, if you dedicate 0.1% of this budget to specific research on metabolomics, you could save lots of money in the future, because anytime you can prevent a disease rather than cure a disease, it’s a big saving.

In Italy, the health system is organized on a regional basis. Each region has its own organization, which is good and bad in the sense that it’s less efficient, but it’s closer to the cities, so you can actually try to adapt the health system to monitor the diseases that are more prevalent in the region and so on.

The region of Tuscany is doing reasonably well; it’s one of the best in Italy. So, I tried to talk to them and said: “Let’s do a pilot study in Tuscany.” A million or two million people is doable, even just with our instruments here.

They said it would be nice, but that they don’t have money. I know they don’t have it. They never have enough money, but if you think of the future, it would be a tiny increase in the budget for something that can potentially provide a big saving ten years from now.

How much would it take for you to be able to test all the people in Tuscany?

There are about four million people. With one instrument, you can run at most a hundred thousand spectra per year. So, you would need twenty instruments to screen everyone every two years. But it’s doable; twenty instruments is twenty million euros.
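The arithmetic behind that estimate can be written out as a short sketch. The population, throughput and screening interval are the figures quoted above; the price of one million euros per instrument is only implied by "twenty instruments is twenty million euros", and the function name is hypothetical.

```python
import math

def instruments_needed(population, spectra_per_instrument_per_year, cycle_years):
    """Instruments required to record one spectrum per person every cycle_years."""
    spectra_per_year = population / cycle_years
    return math.ceil(spectra_per_year / spectra_per_instrument_per_year)

population = 4_000_000            # people in Tuscany (figure quoted above)
capacity = 100_000                # maximum spectra per instrument per year (quoted above)
cycle_years = 2                   # everyone screened once every two years
price_per_instrument = 1_000_000  # euros; implied by the quoted total, an assumption

n_instruments = instruments_needed(population, capacity, cycle_years)
total_cost = n_instruments * price_per_instrument

print(n_instruments, "instruments,", total_cost, "euros")
```

Changing `cycle_years` to 1 doubles the number of instruments, which shows how strongly the estimate depends on the screening interval.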

You would also need technicians to run the instruments full time, 24 hours a day, but it’s not a big investment if you think about it. If you do it for five, ten years, for four million people every two years, it’s nothing compared to what the region of Tuscany spends on health.

Politicians have to justify what they do: “Okay, I spent 0.1% more this year and I have to justify it. I don’t have enough budget and maybe some people will not get adequate care because I’m spending money on a project that may be good in ten years.”

It’s very difficult to make long term plans under the present organization in western democracy, where politicians are judged every other day for their immediate success or failure, rather than just at the end of their mandate. Anyway, our western democracy is still the best we can do!

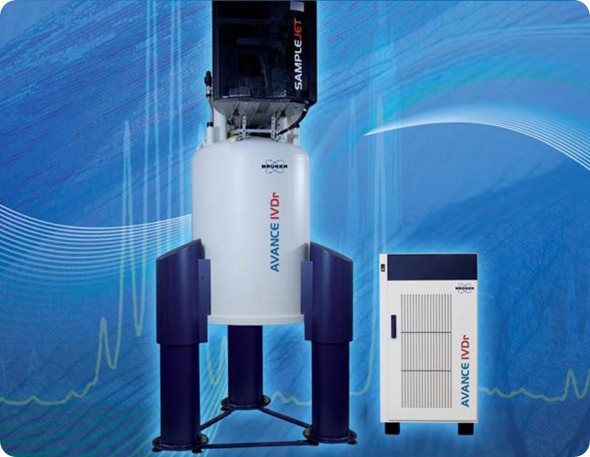

Which particular NMR machine do you use at Giotto Biotech for metabolomics in solution and why? What other equipment do you use?

We use the standard 600 MHz for IVD by NMR and we make sure that we keep absolutely in line with Bruker’s recommendations because we believe that if we’re not standardized with a particular instrument in the first place, we can never achieve what we would like to achieve in terms of screening broad numbers of people and so on. I think that’s an absolute must.

Bruker recommends a 600, a choice dictated by a good balance between price and performance. It’s good for routine work in metabolomics. Of course, occasionally we also use higher field instruments.

If we detect particular signals that we’re not able to actually identify and say what chemical it is, then we can move to higher fields and do more experiments, but 99% of the work for metabolomics is done on the standard 600 MHz.

We use a five millimeter probe, the one that is recommended for metabolomics and is completely standardized. We also have an automated sample changer, the SampleJet, which maintains the samples at 4 °C. Then, they are preheated when they are inserted so that you don’t waste time waiting for the sample to equilibrate to the right temperature inside. This is amazing, because in the previous setting, most of the time was spent waiting for samples to reach the right temperature.

Temperature control is very important. You need to be sure that samples are all taken at exactly the same temperature, by which I mean to within plus or minus 0.1 degrees. To achieve that, you need to keep the sample there for minutes and it’s sometimes more than the time you need to take the spectra. Since they are biological samples, it’s better to keep them cold and then preheat them just before running, while the previous one is running. It’s kind of obvious, but it’s a great improvement.

How has your NMR technique been influenced by the standardized techniques from Bruker?

As NMR spectroscopists, many of my colleagues and I like to develop our own sequences and we like to improve things here and there. We made the decision that we should not do that; we should perform metabolomics as it is recommended by Bruker.

You may think that you could do a little better, which is always possible, but if you and others in the many labs across the world started doing that, then everybody would be doing something different and it would be the end of metabolomics, really.

You need to make sure that everybody runs the spectra in exactly the same way. Is that best or close to the best? Well, even if it is not the best, who cares? It’s being done in the same way and what is really crucial for metabolomics is standardization.

I think we are already advanced in standardizing the NMR procedures, thanks to Bruker’s work over the last ten years or so. On the other hand, standardization of samples is very difficult. If you take blood, you need to make sure that wherever it’s taken in the world it’s treated in the same way, using the same technique.

Obtaining serum in the same way is not so difficult because that is a standardized procedure already, but some people let it stay at room temperature for ten minutes, some half an hour and some for an hour or two, and this changes a lot.

Potentially, it can change the sample more than the effect of a disease does. For example, we were involved in a study about colorectal cancer, investigating whether a treatment may be better or worse. The patients were all terminally ill with metastatic cancer, based at three different hospitals in a northern European country.

Each hospital provided a group of controls and a group of patients. We took the spectra and performed normal statistical analysis to see whether there were patterns. We found three different groups. However, the three different groups were the three hospitals rather than the different groups being the patients and controls. The largest difference among the samples was the origin.

We found that there were signals reflecting that the samples were not prepared in exactly the same way. Once we identified those signals, we got rid of them. It took a lot of statistical analysis, but then the difference between healthy people and diseased people came up.

How would you like to see metabolomics research standardized in the future? What is currently being done to standardize the field?

You need to have samples which are handled in exactly the same way, otherwise, you see more difference in the way they are handled than what you are looking for. That’s one of the reasons why some people think that metabolomics is not progressing so much, which is true, but there are better and very simple ways to do that. You just need to follow a standard operating procedure.

Actually, Paola Turano from our lab was involved in a European project about the standardization of samples for all omics including genomics, proteomics and metabolomics. In the end, we are providing standard operating procedures that are actually being incorporated in the recommendations of the European label of the CEN, which is this standardization office in Berlin.

They will probably become standard within a year, which I think is great because at least you don’t have to explain why people should do things in a certain way. If we have these recommendations written, then people should follow them, so that things are made easier.

What advances have you made to the metabolomics field in recent years?

The innovation in metabolomics should be in the analysis of the data and it’s a lot of bioinformatics and statistics. We have developed our own program for statistical analysis, which is great.

It was published in PNAS a few years ago and is about clustering data when you know nothing about the data, referred to as “unsupervised.” You don’t know anything; you have a bunch of data on, for example, the three hospitals I mentioned; you do an unsupervised analysis and find three hospitals, but no difference between patients and controls.

This is totally unsupervised and it tells you whether there are elements that can group different spectra together and differentiate them from another group. This is called ‘clusterization’, cluster analysis.
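To make the idea of unsupervised grouping concrete, here is a minimal sketch using plain k-means on invented two-bin "spectra". This is not the program published in PNAS, whose algorithm is not described in the interview; k-means is swapped in as a generic stand-in, and the data, the function names and the choice of three clusters are all assumptions made for illustration. The toy data mimic the hospital example: samples differ mainly by origin, so the clusters recover the three sites without ever being told about them.

```python
import math

def distance(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def mean(vectors):
    """Component-wise mean of a non-empty list of vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]

def init_centroids(points, k):
    """Deterministic farthest-point initialisation (no random seed needed)."""
    centroids = [points[0]]
    while len(centroids) < k:
        farthest = max(points, key=lambda p: min(distance(p, c) for c in centroids))
        centroids.append(farthest)
    return centroids

def kmeans(points, k, iterations=20):
    """Plain k-means: assign each point to its nearest centroid, then update."""
    centroids = init_centroids(points, k)
    labels = [0] * len(points)
    for _ in range(iterations):
        labels = [min(range(k), key=lambda j: distance(p, centroids[j])) for p in points]
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # keep the old centroid if a cluster is empty
                centroids[j] = mean(members)
    return labels

# Invented intensities in two spectral bins; four samples per hypothetical hospital.
hospital_a = [[1.0, 1.0], [1.1, 0.9], [0.9, 1.1], [1.0, 1.2]]
hospital_b = [[5.0, 1.0], [5.1, 1.1], [4.9, 0.9], [5.0, 1.2]]
hospital_c = [[1.0, 5.0], [1.1, 5.1], [0.9, 4.9], [1.2, 5.0]]
spectra = hospital_a + hospital_b + hospital_c

labels = kmeans(spectra, k=3)
print(labels)  # cluster index per sample, in the order A, B, C
```

No labels are supplied to `kmeans`; the grouping comes only from the data, which is what "unsupervised" means here. In a real study the number of clusters would not be known in advance.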

There are many programs that do that, but for metabolomics, none of them works very well, while this one works beautifully. We are trying to push for that tool, and I think it should be incorporated into Bruker’s platform as an option for users.

What advances to the Bruker technology have there been in recent years? How has this affected the results?

There have been technological advances, probably not striking advances, but as I said before, in this particular field, what is important is not achieving great novelty; it’s important that the instrument is behaving in a predictable way. Predictable means reproducible to better than 1% intensity of signals and Bruker has made progress in the last few years in making these instruments absolutely reliable.

One choice that Bruker made two or three years ago or so, was to not use the CryoProbe for metabolomics. We had been using the 600 MHz spectrometer with the CryoProbe. At some point, Manfred said Bruker had decided that as a company policy, the 600 MHz spectrometer for metabolomics should not have the Cryo.

The advantage of removing the Cryo is that it was introducing a little bit of variability of the intensity of the signals and by removing it, the reproducibility becomes even better than before, although it was already very good. The disadvantage is that you lose sensitivity, so it’s a trade-off.

However, there is another element, which is that the instrument becomes cheaper and if you want to spread metabolomics, you need to offer something that is not terribly expensive. You suffer from losing sensitivity a little bit, although you could perhaps increase the number of scans and the time it takes to do an analysis. However, you gain improved flexibility and a cheaper instrument. I think, in the end, it was the right choice by Bruker.

Do you think that the technological advances for metabolomics would be in smaller systems or better probes?

Better probes, always. The progress with the probes, and even with the CryoProbe, has been very significant. One of the reasons why I think Bruker has made a good choice in removing the CryoProbe is its cost, and the fact that the probe we have now, which is not as sensitive as the Cryo, is actually already much more sensitive than the equivalent probe of ten years ago. If the probe technology improves, even without Cryo, you can get good sensitivity. That is important.

Why would you prefer to see advances in the probes rather than smaller systems?

There is an intrinsic limit to the ability to detect a large number of metabolites, because an NMR spectrum is very crowded. You have many different signals from many different metabolites that overlap with one another.

So, even if the sensitivity is higher, you don’t have the resolution, and even if you go to 1 or 1.2 gigahertz, you will probably never have enough resolution to resolve everything; it’s an intrinsic limitation.

It’s not a matter of whether there is enough signal, in a reasonable amount of time, to detect it. Sometimes, you cannot detect it because it’s underneath the signals of other metabolites that are much more abundant.

Actually, this is one of the reasons why we like to speak about fingerprints rather than panels of metabolites that you can identify each time. Of course, you can identify metabolites and quantify them, yes. However, if from an NMR spectrum, you quantify fifty metabolites or a hundred metabolites, which is a very good number, there is more in the spectrum than you are able to detect and quantify exactly, but it’s there underneath.

So, if you use the spectrum as such, as the fingerprint, there is also some small contribution from metabolites that you have not identified and that you will never be able to quantify, even though they are there.

Therefore, when it comes to distinguishing a group of people from another group of people and saying: “This group has a disease and this one has not, and I can tell you from the fingerprint,” the fingerprint is more accurate than any panel of metabolites that you can extract from the fingerprint and quantify, because you are never extracting 100% of the information.

You may extract 80% or 85%, but the other 15% is hidden and if you don’t do fingerprinting, you’ll throw it away. Comparing fingerprints is the easiest thing. Again, if you think about diagnosis, it’s not about learning more about the biochemistry of the disease but, if you aim at diagnosis, rather than saying: “I want to find a marker, or maybe two markers, three markers, a panel of markers, ten markers,” well a fingerprint is all markers, all at once, so why discard it?

Keep the fingerprint and use it as a marker. Not all people who do metabolomics by NMR may agree with what I’ve said, but many do. I think it’s one of the ways in which metabolomics by NMR can actually become a routine way to screen a population, because it’s just a fingerprint. If the samples are prepared correctly and if the spectra are taken correctly, then fingerprints are comparable and you learn from that.

Where can readers find more information?

https://www.cerm.unifi.it/about-us/people/claudio-luchinat

About Prof. Claudio Luchinat

Claudio Luchinat is Professor of General and Inorganic Chemistry at the Department of Chemistry "Ugo Schiff" of the University of Florence and is associated with the Magnetic Resonance Center (CERM), of which he is co-founder. He is also Co-founder, Director and President of the Inter-University Consortium for Magnetic Resonance of Metallo Proteins (CIRMMP), Co-founder and President of the Board of Directors of the non-profit Foundation Fiorgen and Co-founder and member of the Scientific Advisory Board of the spinoff Giotto Biotech srl.

Among other offices, Claudio Luchinat is a member of the Supervisory Board of the Paramagnetic NMR Facility of Leiden University, of the Scientific Committee of the French High Field NMR Research Infrastructure (EN-RMN-THC), of the External Advisory Board of the SEA DRUG Biomolecular NMR Facility of the University of Patras and an associate member of ICCOM-CNR.

His research interests include: bioinorganic chemistry, structural biology, development of structural methods based on NMR in solution and solid state, metalloproteins and metalloenzymes, theory of electron and nuclear relaxation, NMR of paramagnetic species, relaxometry, contrast agents, NMR-based analytical methods.

Since 2008, his research has increasingly focused on metabolomics, seeking to obtain the metabolic profiles of biological fluids such as urine and blood using NMR spectroscopy; define procedures for the preparation of the samples and for the acquisition of NMR spectra; develop statistical methods for data analysis; and correlate the metabolic profiles with pathophysiological characteristics of the subjects studied. Claudio Luchinat has become one of the international reference points for metabolomics, and is invited to give plenary lectures on the subject at conferences both in this field and in other fields.

He is the author of over 560 publications, in English, in internationally renowned scientific journals; his h-index is 71, and his works have been cited more than 20,000 times (Google Scholar). He has been invited to give seminars at numerous prestigious universities and research institutes around the world, and plenary lectures at international seminars, symposia and conferences.

A coordinator or principal investigator of major European research projects, he has also organized numerous international scientific conferences.

About Bruker BioSpin Group

The Bruker BioSpin Group designs, manufactures, and distributes advanced scientific instruments based on magnetic resonance and preclinical imaging technologies. These include our industry-leading NMR and EPR spectrometers, as well as imaging systems utilizing MRI, PET, SPECT, CT, Optical and MPI modalities. The Group also offers integrated software solutions and automation tools to support digital transformation across research and quality control environments.

Bruker BioSpin’s customers in academic, government, industrial, and pharmaceutical sectors rely on these technologies to gain detailed insights into molecular structure, dynamics, and interactions. Our solutions play a key role in structural biology, drug discovery, disease research, metabolomics, and advanced materials analysis. Recent investments in lab automation, optical imaging, and contract research services further strengthen our ability to support evolving customer needs and enable scientific innovation.