Since 2017, Olympus Corporation has participated with Dr. Kiyomi Taniyama, President of the Kure Medical Center and Chugoku Cancer Center, in a joint research program called “A New Approach to Develop Computer-Aided Diagnosis Using Artificial Intelligence (AI) for Gastric Biopsy Specimens,” which has the potential to help streamline the workload of clinical pathologists. The research paired Dr. Taniyama’s expertise in gastric pathology diagnosis and digital pathology with Olympus’ imaging system technology and proficiency in AI development. Olympus, which holds the leading market share¹ in microscopes, has continued to develop a computer-aided diagnosis (CAD) solution for pathology based on its proprietary deep learning technology.

To develop its deep learning technology, Olympus used gastric biopsy specimens collected for diagnosis at the Kure Medical Center and Chugoku Cancer Center between 2015 and 2018. The technology is built on a multi-resolutional convolutional neural network² (CNN), developed by Olympus and designed to analyze the features of pathology sample images. The CNN identifies areas of adenocarcinoma (ADC) tissue in an image, and based on that result each image is classified as ADC or non-adenocarcinoma (NADC). The research involved two stages: a learning stage, in which the CNN model was trained on digital pathology images and associated information, and a prediction stage, in which the trained model classified images as ADC or NADC.
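The release does not say how the CNN’s region-level output becomes an image-level label. The sketch below assumes the network yields a per-tile ADC probability map and that an image is called ADC when the fraction of tiles above a tile-level cutoff reaches an image-level threshold. It does not model the multi-resolution CNN itself, and `adc_area_fraction`, `classify_image`, `tile_threshold`, and `image_threshold` are hypothetical names, not Olympus’ method.

```python
from typing import Sequence

# Hypothetical per-tile ADC probabilities for one image (rows x columns,
# values in [0, 1]), standing in for the output of a segmentation-style CNN.
ProbMap = Sequence[Sequence[float]]


def adc_area_fraction(prob_map: ProbMap, tile_threshold: float = 0.5) -> float:
    """Fraction of tiles whose ADC probability is at least tile_threshold."""
    tiles = [p for row in prob_map for p in row]
    if not tiles:
        raise ValueError("empty probability map")
    return sum(1 for p in tiles if p >= tile_threshold) / len(tiles)


def classify_image(prob_map: ProbMap, image_threshold: float,
                   tile_threshold: float = 0.5) -> str:
    """Label the image 'ADC' if its estimated ADC area reaches image_threshold."""
    if adc_area_fraction(prob_map, tile_threshold) >= image_threshold:
        return "ADC"
    return "NADC"


if __name__ == "__main__":
    prob_map = [[0.1, 0.2, 0.9],
                [0.0, 0.7, 0.3]]
    print(adc_area_fraction(prob_map))                     # 2 of 6 tiles
    print(classify_image(prob_map, image_threshold=0.25))  # ADC
```

An area-based score is only one plausible aggregation; a maximum tile probability would work the same way with a different threshold.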

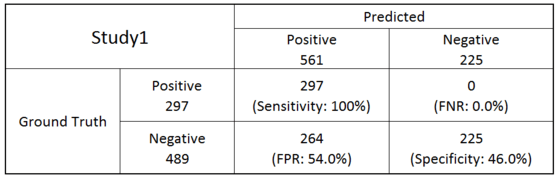

The AI algorithm was developed using 368 whole slide pathology images for training and 786 sample images (297 ADC and 489 NADC) for tuning the classification threshold (Table 1).

Table 1: With the threshold set so that all 297 ADC samples were assessed as positive, 225 of the 489 NADC samples were assessed as negative. This represents 100% sensitivity (297 of 297) and 46% specificity (225 of 489).
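Choosing a threshold that calls every ADC image positive, as in Table 1, can be sketched as follows. This assumes each image has a single ADC score and that a score at or above the threshold counts as positive; the function names and example scores are hypothetical and do not reflect the study data.

```python
from typing import Sequence, Tuple


def threshold_for_full_sensitivity(adc_scores: Sequence[float]) -> float:
    """Highest threshold that still labels every ADC image positive.

    With the rule "score >= threshold means positive", this is simply the
    lowest score among the ADC images.
    """
    return min(adc_scores)


def sensitivity_specificity(adc_scores: Sequence[float],
                            nadc_scores: Sequence[float],
                            threshold: float) -> Tuple[float, float]:
    """Return (sensitivity, specificity) at the given threshold."""
    true_pos = sum(1 for s in adc_scores if s >= threshold)
    true_neg = sum(1 for s in nadc_scores if s < threshold)
    return true_pos / len(adc_scores), true_neg / len(nadc_scores)


if __name__ == "__main__":
    # Hypothetical scores for illustration only.
    adc = [0.42, 0.61, 0.88, 0.95]
    nadc = [0.05, 0.12, 0.30, 0.45, 0.50, 0.70]
    t = threshold_for_full_sensitivity(adc)
    print(t, sensitivity_specificity(adc, nadc, t))  # 0.42 (1.0, 0.5)
```

Any higher threshold would miss the lowest-scoring ADC image, while any lower one can only keep specificity the same or reduce it.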

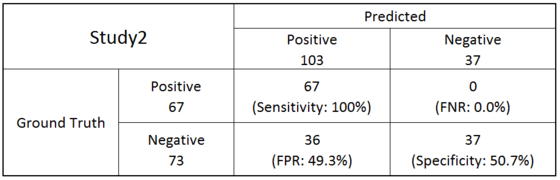

On the tuning set, the classification threshold chosen to detect all ADCs achieved 46% specificity for NADCs. In a final evaluation on 140 new cases (67 ADC and 73 NADC), the algorithm with this threshold achieved 100% sensitivity and 50.7% specificity (Table 2).

Table 2: A final evaluation was made on 140 new sample images (67 ADC and 73 NADC). All 67 ADC samples were assessed as positive, and 37 of the 73 NADC samples were assessed as negative. This represents 100% sensitivity (67 of 67) and 50.7% specificity (37 of 73).
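The sensitivity and specificity figures in Tables 1 and 2 follow directly from the reported counts. The short check below uses an illustrative `rates` helper; the false positive counts (264 and 36) are the NADC samples not assessed as negative, that is 489 − 225 and 73 − 37.

```python
def rates(tp: int, fn: int, tn: int, fp: int) -> dict:
    """Sensitivity, specificity and false negative rate from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "false_negative_rate": 1 - sensitivity,
    }


if __name__ == "__main__":
    table1 = rates(tp=297, fn=0, tn=225, fp=264)  # threshold tuning set
    table2 = rates(tp=67, fn=0, tn=37, fp=36)     # final evaluation set
    print(f"{table1['specificity']:.0%}")  # 46%
    print(f"{table2['specificity']:.1%}")  # 50.7%
```

With no ADC image assessed as negative in either set, the false negative rate on both sets is zero.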

AI-based CAD software with a low false negative rate⁶ can help pathologists detect positive samples. By screening out negative samples and helping prevent positive samples from being overlooked, such software has the potential to eliminate duplicated effort in pathologists’ workload and further improve the accuracy of pathology diagnosis of gastric biopsies, more than four million of which are performed annually in Japan.
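The screening role described above can be illustrated with a hypothetical triage step: images scoring at or above the threshold go to a review-first queue, and the rest are marked as screened negative. The release does not describe how the software would route cases in practice; `triage` and its queue names are invented for this sketch.

```python
from typing import Dict, List, Sequence, Tuple


def triage(cases: Sequence[Tuple[str, float]],
           threshold: float) -> Dict[str, List[str]]:
    """Split (case_id, adc_score) pairs into two work queues."""
    queues: Dict[str, List[str]] = {"review_first": [], "screened_negative": []}
    for case_id, score in cases:
        # Scores at or above the threshold are positive calls and go to the
        # review-first queue; everything else is a screened negative.
        key = "review_first" if score >= threshold else "screened_negative"
        queues[key].append(case_id)
    return queues


if __name__ == "__main__":
    cases = [("A", 0.90), ("B", 0.10), ("C", 0.50), ("D", 0.20)]
    print(triage(cases, threshold=0.42))
    # {'review_first': ['A', 'C'], 'screened_negative': ['B', 'D']}
```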

To learn more about Olympus’ AI efforts in the Life Sciences area, please visit: Olympus-Global.com/news/2018/nr00869.html.