Currently, clinicians rely on expensive advanced imaging to diagnose rotator cuff (RC) tears, which are highly prevalent in older adults.

Background

Among joints with a high number of degrees of freedom (hDOF), the shoulder displays a vast range of motion (ROM). It occupies a high-dimensional anatomical space with six degrees of freedom, and 18 different muscles control its articulation.

The muscles that stabilize the shoulder joint, collectively known as the RC, are frequently injured during movement. In fact, shoulder injuries are the most common of all musculoskeletal (MSK) injuries. With advancing age, symptomatic RC tears and chronic pain gradually worsen, leading to loss of range of motion, poor neuromuscular control of the shoulder, and physical disability.

Previously, researchers used rodent models of tendon injury and repair to infer upper or lower extremity function from quadruped gait tasks. These methods revealed functional differences between RC tendon injury and repair strategies, but they could not capture bimanual forelimb movements analogous to human motion patterns.

Furthermore, in studies with human subjects, researchers used expensive marker- or sensor-based techniques to examine shoulder function.

Overall, there remains a need for inexpensive tools to remotely track joint health and evaluate recovery from MSK injuries for underserved communities, including the elderly, patients in rural areas, and groups historically facing the highest demographic and socioeconomic barriers to getting in-person care.

About the study

In the present study, researchers used a murine model of RC injury to develop a machine learning (ML) pipeline for quantifying motion quality related to shoulder function. The study model recapitulated all histopathological features of human RC tears.

They also used a new preclinical model of shoulder function built on the string-pulling task, in which mice reel in a string hand over hand, much as a sailor hauls in a rope on a ship.

Interestingly, the task is performed in a similar way across species. Hence, the ML pipeline developed in rodents in this study can, in principle, be applied to assess shoulder health in humans.

The string-pulling assay also has several advantages over quadruped gait tasks, the most important of which is that it is translatable to bipedal humans.

Further advantages are that it allows each arm to be assessed kinematically on its own and provides a within-animal control in the contralateral limb. The assay also separates arm movements from lower extremity movements and includes an overhead motion component that is frequently impaired in patients with RC tears.

The researchers first trained 12 adult male wild-type mice on the string-pulling task in a plexiglass behavior box, with sessions three times a week for at least two weeks.

During preoperative baseline recording, each mouse pulled a 0.75 m long string in two trials. The animals were then split into two surgical groups: in one group, the supraspinatus (SS) and infraspinatus (IS) tendons were transected and the muscles denervated; in the other (n = 6), the transected tendons were repaired immediately.

After one week of recovery, string-pulling behavior was recorded for four more weeks. The team used a high-definition (HD) video camera, mounted on a tripod 20 cm from the front plexiglass pane, to record three videos of each mouse performing a discrete bout of string pulling.

In addition, the researchers developed video-based biomarkers of shoulder function and validated them in human patients with RC or other MSK pathology diagnosed using magnetic resonance imaging (MRI).

The researchers built two DeepLabCut (DLC) v2.2.0 models, one for a pilot cohort of three mice and another for all 13 mice in the experiment, with labeled hand locations for 320 and 1080 video frames, respectively.

The researchers calculated the amplitude and time of reach and pull epochs from the Y-axis kinematic trajectory of the hands. Next, they calculated the full width at half maximum (FWHM) as a measure of movement fluency during reaching and pulling.
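To make these measures concrete, here is a minimal Python sketch under stated assumptions; it is not the study's code. It assumes a 1-D Y-axis hand trajectory sampled at `fs` frames per second with Y increasing upward, treats a reach as a trough-to-peak segment and a pull as a peak-to-trough segment, and measures FWHM at the level halfway between each peak and the mean of its two neighbouring troughs. The function names (`local_extrema`, `reach_pull_epochs`, `fwhm`) and these conventions are hypothetical.

```python
import numpy as np


def local_extrema(y):
    """Indices of local maxima (peaks) and minima (troughs) of a 1-D trace.

    Simple neighbour comparison; real tracking data would normally be
    filtered first (assumption, not taken from the study).
    """
    y = np.asarray(y, dtype=float)
    mid = y[1:-1]
    peaks = np.where((mid > y[:-2]) & (mid >= y[2:]))[0] + 1
    troughs = np.where((mid < y[:-2]) & (mid <= y[2:]))[0] + 1
    return peaks, troughs


def reach_pull_epochs(y, fs):
    """Pair successive extrema into reach (trough->peak) and pull (peak->trough) epochs.

    Hypothetical convention: Y increases upward, so a reach raises the hand.
    For image coordinates (Y increasing downward), pass -y instead.
    Returns two lists of (amplitude, duration_in_seconds) tuples.
    """
    y = np.asarray(y, dtype=float)
    peaks, troughs = local_extrema(y)
    # Time-ordered sequence of extrema labelled +1 (peak) or -1 (trough).
    events = sorted([(i, 1) for i in peaks] + [(i, -1) for i in troughs])
    reaches, pulls = [], []
    for (i0, k0), (i1, k1) in zip(events, events[1:]):
        amp = abs(y[i1] - y[i0])
        dur = (i1 - i0) / fs
        if k0 == -1 and k1 == 1:
            reaches.append((amp, dur))
        elif k0 == 1 and k1 == -1:
            pulls.append((amp, dur))
        # Two consecutive extrema of the same type are skipped.
    return reaches, pulls


def fwhm(y, fs):
    """FWHM in seconds for each peak that has a trough on both sides.

    The half-maximum level is taken halfway between the peak and the mean
    of its neighbouring troughs (a hypothetical baseline choice).
    """
    y = np.asarray(y, dtype=float)
    peaks, troughs = local_extrema(y)
    widths = []
    for p in peaks:
        left = troughs[troughs < p]
        right = troughs[troughs > p]
        if len(left) == 0 or len(right) == 0:
            continue
        base = 0.5 * (y[left[-1]] + y[right[0]])
        half = base + 0.5 * (y[p] - base)
        lo = p
        while lo > left[-1] and y[lo - 1] >= half:
            lo -= 1
        hi = p
        while hi < right[0] and y[hi + 1] >= half:
            hi += 1
        widths.append((hi - lo + 1) / fs)  # width counted in whole samples
    return np.array(widths)
```

On a 1 Hz sine sampled at 100 Hz (`y = np.sin(2 * np.pi * np.arange(300) / 100)`), every reach and pull has an amplitude of 2 and lasts 0.5 s, and each interior peak has an FWHM of about 0.5 s. Because widths are counted in whole samples, their resolution is 1/`fs`.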

Human participants comprised patients with RC injuries and controls. The team recorded them performing the string-pulling task with a smartphone camera and processed the videos with DLC.

Peaks and troughs were extracted to obtain string-pulling amplitude and time, which were analyzed as for the rodent data: the Y-axis kinematic trajectories of the hands were high-pass filtered at 0.1 Hz and low-pass filtered at 7 Hz. For other analyses, such as FWHM, the X/Y kinematic trajectories of the hands and elbows were only low-pass filtered.
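The filtering step can be sketched with NumPy alone, under an explicit assumption: the text gives only the 0.1 Hz and 7 Hz corner frequencies, not the filter design, so the hypothetical `fft_filter` below simply zeroes frequency bins outside the pass band. This is zero-phase but can ring on real data; a Butterworth design applied forward and backward (for example SciPy's `butter` with `filtfilt`) is a common alternative.

```python
import numpy as np


def fft_filter(x, fs, low_cut=None, high_cut=None):
    """Zero-phase brick-wall filter applied in the frequency domain.

    low_cut:  high-pass corner in Hz (content below it is removed).
    high_cut: low-pass corner in Hz (content above it is removed).
    Illustrative stand-in only; not necessarily the study's filter.
    """
    x = np.asarray(x, dtype=float)
    spectrum = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    keep = np.ones_like(freqs, dtype=bool)
    if low_cut is not None:
        keep &= freqs >= low_cut  # drops DC offset and slow drift
    if high_cut is not None:
        keep &= freqs <= high_cut  # drops fast tracking jitter
    return np.fft.irfft(spectrum * keep, n=x.size)
```

For the amplitude and time analysis, a call such as `fft_filter(y, fs, low_cut=0.1, high_cut=7.0)` mirrors the described band; for analyses such as FWHM that are only low-pass filtered, `low_cut` is omitted.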

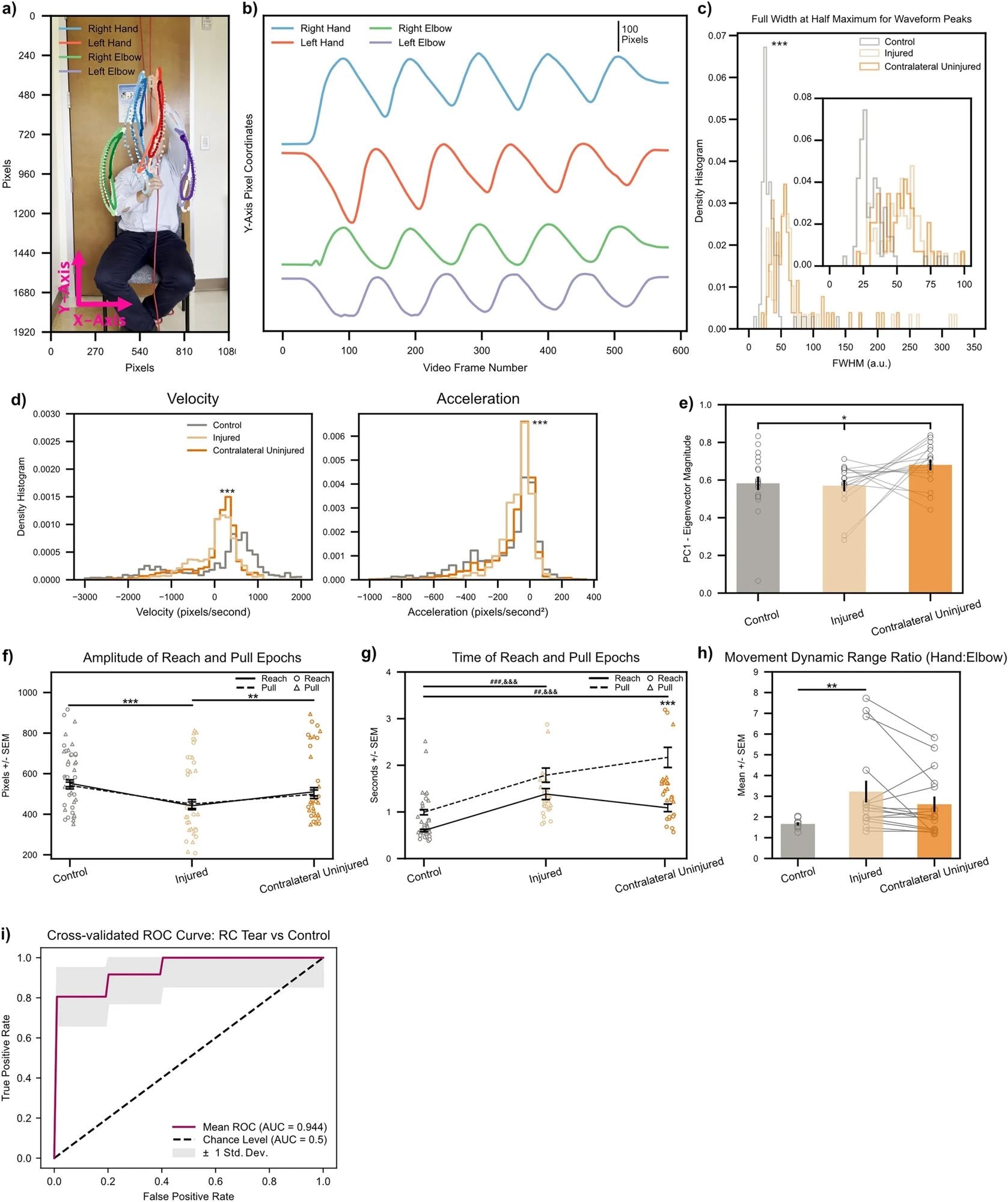

Biomarkers calculated on string-pulling kinematic traces directly translate to human subjects with shoulder injury. (a) Representative control subject with three cycles of string pulling superimposed. Elbows were labeled in addition to hands for the human patients, given the ready visibility of human elbows. (b) Same subject as in (a), with data shown for one full trial. Note the similarity of kinematic trajectories for the hands between human and rodent subjects (Fig. 2b). (c) FWHM measurements for control (n = 12) and injured shoulders (n = 6). Inset shows a zoomed view of FWHM values between 0 and 100. ***P < 0.001, Kolmogorov–Smirnov test. (d) Left, histogram of velocity values (calculated on the Y-axis kinematic trajectories of the hands) for control (n = 6), injured (n = 6), and contralateral uninjured (n = 6) shoulders. Right, same as left for acceleration values. ***P < 0.001, Kruskal–Wallis test. (e) Quantification of absolute eigenvector weights for the first principal component (PC) (data shown for Y-axis eigenvector weights of the hands). Gray lines show the change between injured and contralateral uninjured shoulders for each trial recorded per subject. *P < 0.1, one-way ANOVA. (f, g) Amplitude and time of reach (solid line) and pull (dashed line) epochs. Individual amplitude/time values for every cycle of string pulling are shown for reaches and pulls with circles and triangles, respectively. (h) Ratio of standard deviation values between ipsilateral hand:elbow pairs, calculated for each subject on their hand/elbow Y-axis kinematic trajectories. Gray lines show the change between injured and contralateral uninjured shoulders for each trial recorded per subject. *P < 0.05; **P < 0.01; ***P < 0.001; two-way ANOVAs with Tukey-corrected post hoc multiple comparisons for all other statistical analyses unless stated otherwise.
(i) Receiver operating characteristic (ROC) curve for a binary logistic regression model fitted to predict whether a patient has no RC tear or an RC tear in one of their shoulders. The maroon line shows the mean ROC across threefold stratified cross-validation; the gray outline shows ±1 standard deviation of uncertainty in the mean ROC estimate.

Results

Mice and humans with RC tears exhibited decreased movement amplitude, prolonged movement time, and quantitative changes in waveform shape during the string-pulling task.

In rodents, injury additionally degraded low-dimensional, temporally coordinated movements. Furthermore, a logistic regression model built on the biomarker ensemble classified human patients as having an RC tear with >90% accuracy.
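A binary logistic regression evaluated with threefold stratified cross-validation, as described for the human classifier, can be sketched in NumPy. The study's features, preprocessing, and solver are not given here, so everything below is illustrative: `fit_logistic` uses plain unregularised gradient descent, and all helper names and the synthetic data are hypothetical. In practice, scikit-learn's `LogisticRegression` and `StratifiedKFold` would do the same job.

```python
import numpy as np


def fit_logistic(X, y, lr=0.1, n_iter=2000):
    """Unregularised logistic regression fitted by batch gradient descent."""
    Xb = np.hstack([np.ones((X.shape[0], 1)), X])  # prepend intercept column
    w = np.zeros(Xb.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-Xb @ w))
        w -= lr * Xb.T @ (p - y) / len(y)  # mean log-loss gradient step
    return w


def predict_proba(w, X):
    """Predicted probability of class 1 (RC tear) for each row of X."""
    Xb = np.hstack([np.ones((X.shape[0], 1)), X])
    return 1.0 / (1.0 + np.exp(-Xb @ w))


def roc_auc(y_true, scores):
    """AUC as P(random positive outscores random negative); ties count 0.5."""
    pos = scores[y_true == 1]
    neg = scores[y_true == 0]
    diff = pos[:, None] - neg[None, :]
    return (np.sum(diff > 0) + 0.5 * np.sum(diff == 0)) / diff.size


def stratified_folds(y, k=3, seed=0):
    """Split sample indices into k folds with similar class proportions."""
    rng = np.random.default_rng(seed)
    folds = [[] for _ in range(k)]
    for cls in np.unique(y):
        idx = rng.permutation(np.where(y == cls)[0])
        for j, i in enumerate(idx):
            folds[j % k].append(i)  # deal each class round-robin into folds
    return [np.array(f) for f in folds]


def cross_validate(X, y, k=3):
    """Per-fold (accuracy, AUC) of the logistic model under stratified k-fold CV."""
    results = []
    for test_idx in stratified_folds(y, k):
        train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
        w = fit_logistic(X[train_idx], y[train_idx])
        p = predict_proba(w, X[test_idx])
        acc = np.mean((p >= 0.5) == y[test_idx])
        results.append((acc, roc_auc(y[test_idx], p)))
    return results
```

Features on very different scales (for example FWHM in frames versus amplitude in pixels) would usually be standardised before fitting, and with small cohorts the per-fold accuracy can vary considerably.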

Intriguingly, only two principal components (PCs) explained >90% of the variance in the human string-pulling data, suggesting that the biomechanical and neural representations of the hand are low-dimensional and that string pulling is itself a low-dimensional behavior.
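The dimensionality result rests on principal component analysis (PCA). A minimal sketch, assuming the kinematics are arranged as a time-by-feature matrix (for example X/Y coordinates of both hands and elbows, a hypothetical layout), computes the fraction of variance explained by each PC from the singular values of the mean-centred data and counts how many PCs are needed to reach a 90% threshold. The function names are hypothetical.

```python
import numpy as np


def explained_variance_ratio(X):
    """Fraction of total variance captured by each PC, largest first.

    X: (n_samples, n_features) array, e.g. video frames x tracked coordinates.
    The squared singular values of the mean-centred data are proportional
    to the variance along each principal axis.
    """
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)
    s = np.linalg.svd(Xc, compute_uv=False)
    var = s ** 2
    return var / var.sum()


def n_components_for(X, threshold=0.9):
    """Smallest number of PCs whose cumulative explained variance reaches threshold."""
    cum = np.cumsum(explained_variance_ratio(X))
    return int(np.searchsorted(cum, threshold) + 1)
```

For data driven by two latent oscillations mixed into eight coordinates plus small noise, the first two ratios sum to nearly 1 and `n_components_for` returns at most 2.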

The results showed no statistically significant difference in the dimensionality of string-pulling behavior between humans with and without RC tears.

Conclusions

Overall, this study's findings pave the way for future development of inexpensive, smartphone-based, at-home diagnostic tests for shoulder injury.

In the future, this technology, a framework bridging animal models, motion capture, convolutional neural networks, and ML-based assessment of movement quality, might help track the recovery of kinematics after shoulder injury or surgery.

After adequate comparison with currently available diagnostic methods, it could potentially even serve as a screening test for shoulder pathology.