A large NHS screening study shows that artificial intelligence can detect subtle signals in “normal” mammograms that reveal which women are most likely to develop aggressive interval cancers years before they appear.

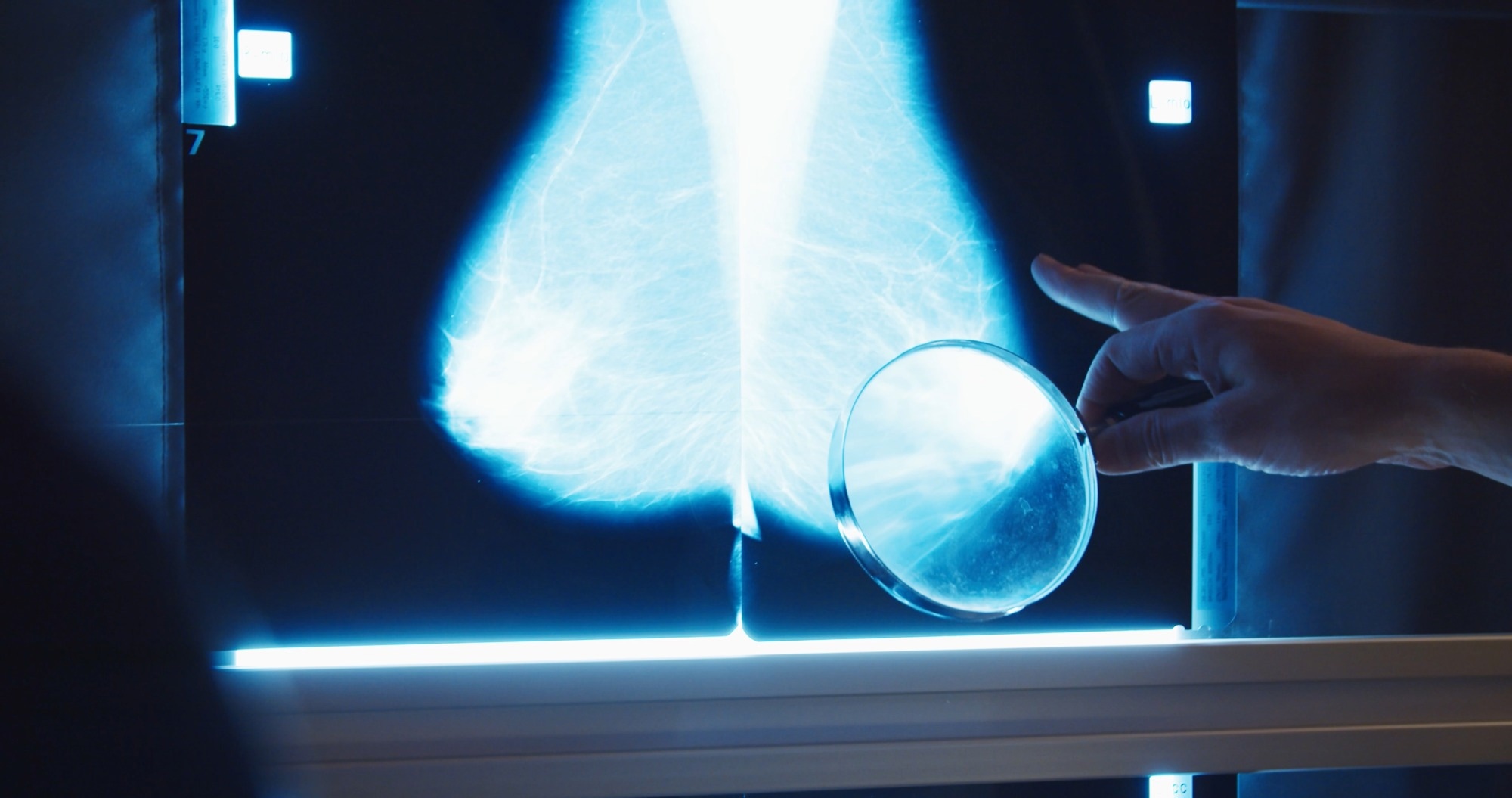

Study: Performance of breast cancer risk prediction algorithms across mammography systems in the UK screening programme. Image Credit: CameraCraft / Shutterstock

In a recent study published in the journal npj Digital Medicine, researchers conducted a large-scale (n = 112,621) retrospective validation study to evaluate the performance of four state-of-the-art Deep Learning (DL) algorithms for predicting “interval cancers”. These cancers, which are diagnosed after a negative screening mammogram but before the next scheduled screening examination, account for approximately 30% of cancers in screening programs and represent a critical diagnostic gap in current mammogram-based screening approaches.

The top-performing algorithm in this four-model comparison achieved an AUC of 0.77 for interval cancers. By flagging the top 4% of “normal” (negative) screening mammograms as highest risk, it identified about 27.5% of the interval cancers in the study cohort.

Model performance varied slightly across the specific machines used to produce mammogram images, and one algorithm showed statistically significant differences between systems. Even so, these findings suggest that DL tools could support risk-stratified breast cancer screening strategies, although prospective clinical evaluation would be required before implementation.

Background: The Challenge of Interval Breast Cancers

For decades, breast cancer screening recommendations have involved women receiving a mammogram once every few years (e.g., every 3 years in the United Kingdom [UK]). However, a growing body of evidence suggests that while these periodic screenings are necessary and effective at detecting most breast cancers, they fail to identify “interval cancers”, cancers diagnosed after a negative screening mammogram but before the next scheduled screening.

These “hidden” cancers, which are observed to develop or become clinically apparent in the periods between screening schedules, are often significantly more aggressive than those detected in routine mammograms, leading to worse prognosis and clinical outcomes, including death.

Traditional approaches to addressing interval cancers have involved clinicians attempting to predict individual risk via genetic assessments (such as polygenic risk scores, which are not routinely implemented in most population screening programs) and family history evaluations (often incomplete).

However, recent advances in Deep Learning (DL) algorithms have led researchers to hypothesize that these Artificial Intelligence (AI) models, trained on millions of mammogram images, may be able to recognize subtle imaging patterns and tissue characteristics in breast tissue that human radiologists might overlook.

Unfortunately, given the wealth of commercial and academic DL models currently available, clinicians do not yet know which model to choose and whether these tools can perform well enough to be included in personalized care.

Study Objective and Model Comparison

The present study aimed to address this knowledge gap by conducting a head-to-head comparison of the breast cancer predictive performance of four of today’s most advanced DL models: Mirai (MIT), iCAD ProFound AI Risk (a commercially available model), Transpara Risk (another commercially available DL tool), and Google Health’s Risk Model.

Validation Dataset From the UK NHS Screening Program

These models were provided with an extensive retrospective validation dataset from the UK’s National Health Service (NHS). The dataset comprised high-resolution “negative” (cancer-free) screening mammograms (n = 112,621) collected between 2014 and 2017 from two distinct NHS screening sites.

Model performance was validated by tracking participants for five years to observe which women eventually developed breast cancers (approximately 1,225 cancers across the follow-up period), including interval cancers.

Evaluation Across Mammography Hardware Platforms

To evaluate the generalizability of algorithm performance across different mammography hardware platforms, the DL models were evaluated on mammography images from different hardware ecosystems, specifically machines from Philips and GE.

Predictive Performance of Deep Learning Models

The study findings revealed that the academic algorithm Mirai consistently demonstrated the highest predictive power (Area Under the Curve [AUC] = 0.72; p < 0.001). While iCAD (AUC = 0.70), Google (AUC = 0.68), and Transpara (AUC = 0.65) achieved lower scores, their predictive performance was still notable given that the input mammograms had previously been interpreted as “normal” during routine screening.
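For readers unfamiliar with the metric, the AUC values above can be read as the probability that a randomly chosen woman who later developed cancer received a higher risk score than a randomly chosen woman who did not. The following is a minimal sketch of that pairwise definition, not the study's evaluation code; the function name `auc` and the toy scores and labels are hypothetical and chosen only for illustration.

```python
def auc(scores, labels):
    """Pairwise AUC: share of (positive, negative) pairs in which the
    positive case has the higher score. Ties count as half a win."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: 1 = later developed cancer, 0 = did not.
scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2, 0.6, 0.1]
labels = [1, 0, 1, 0, 0, 0, 1, 0]
toy_auc = auc(scores, labels)  # 11 of 15 pairs are ranked correctly
```

An AUC of 0.5 corresponds to chance-level ranking and 1.0 to perfect ranking, which is why values around 0.7 on mammograms already read as “normal” are treated as notable.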

Identification of High-Risk Patients for Interval Cancers

The models could also identify future interval cancers from screening examinations initially interpreted as negative (Mirai’s interval cancer AUC = 0.77). When researchers examined the 4% of women Mirai scored as “highest risk,” about 27.5% of all interval cancers in the cohort occurred within this group during follow-up.

Expanding this high-risk group to the top 14% of women nearly doubled the share of interval cancers captured, to approximately 50.3% of all future interval cancers in the cohort.
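To put these thresholds in context: if women were flagged at random, the top 4% would be expected to contain about 4% of interval cancers. The sketch below shows how a capture rate at a given threshold could be computed, and works through the enrichment implied by the reported figures. The function names and the ten-woman toy cohort are hypothetical, and the enrichment ratios are simple arithmetic on the reported percentages rather than values reported by the study.

```python
def capture_rate(scores, labels, top_percent):
    """Fraction of all positive cases found among the top_percent
    highest-scoring individuals."""
    total_positives = sum(labels)
    if total_positives == 0:
        raise ValueError("no positive cases to capture")
    n_flagged = max(1, round(len(scores) * top_percent / 100))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    flagged_positives = sum(label for _, label in ranked[:n_flagged])
    return flagged_positives / total_positives


def enrichment(captured_percent, flagged_percent):
    """How many times more cases are captured than random flagging would yield."""
    return captured_percent / flagged_percent


# Hypothetical toy cohort of ten women: 1 = later interval cancer.
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.50, 0.40, 0.30, 0.20, 0.10]
labels = [1, 0, 0, 1, 0, 0, 0, 1, 0, 0]
top20 = capture_rate(scores, labels, 20)  # 1 of 3 cancers in the top two
top40 = capture_rate(scores, labels, 40)  # 2 of 3 cancers in the top four

# Arithmetic on the figures reported in the article.
enrichment_top4 = enrichment(27.5, 4.0)    # about 6.9 times random flagging
enrichment_top14 = enrichment(50.3, 14.0)  # about 3.6 times random flagging
```

The falling enrichment illustrates the trade-off in choosing a threshold: widening the high-risk group captures more interval cancers in total, but each additional woman flagged is less likely to be a future case.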

Performance Across Mammography Machine Manufacturers

The study also evaluated whether algorithm performance differed across mammography machine manufacturers. Three of the four models performed statistically similarly on images generated by Philips and GE machines. The exception was Transpara, which performed better on GE images than on Philips images (AUC = 0.69 versus 0.62), although the difference was relatively modest.
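A comparison like this amounts to computing the AUC separately within each manufacturer's images. The sketch below shows one way such a stratified calculation could look; it is an illustration under assumed inputs, not the authors' analysis. The group labels "A" and "B" and all scores are hypothetical and do not represent results for any real vendor.

```python
from collections import defaultdict


def pairwise_auc(pos, neg):
    """Share of (positive, negative) pairs ranked correctly; ties count half."""
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def auc_by_group(records):
    """records: iterable of (group, score, label) tuples with label 1 or 0.
    Returns {group: AUC} for groups that contain both outcomes."""
    pos, neg = defaultdict(list), defaultdict(list)
    for group, score, label in records:
        (pos if label == 1 else neg)[group].append(score)
    return {g: pairwise_auc(pos[g], neg[g]) for g in pos if g in neg}


# Hypothetical records: (machine group, risk score, later cancer yes/no).
records = [
    ("A", 0.9, 1), ("A", 0.2, 0), ("A", 0.4, 0), ("A", 0.6, 1),
    ("B", 0.5, 1), ("B", 0.7, 0), ("B", 0.3, 0), ("B", 0.8, 1),
]
per_group = auc_by_group(records)
```

Deciding whether a gap between two groups is statistically significant requires a further step, such as a bootstrap or DeLong's test, which this sketch does not attempt.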

The researchers also highlight several limitations, including the exclusion of mammograms with implants or non-standard imaging views, incomplete ethnicity data, and the possibility that results may not fully generalize to mammography systems from other major vendors. The authors also note that retrospective validation may underestimate the potential clinical utility, since some cancers might be detected through additional imaging pathways rather than solely through symptomatic presentation.

Conclusions: Toward Risk-Stratified Breast Cancer Screening

The present study provides evidence suggesting that DL models can identify previously unrecognized imaging signals in standard mammograms to predict future cancer risk. Models such as MIT’s Mirai flagged more than a quarter of interval cancers within the 4% of women scored as highest risk.

Future work should aim to investigate these results in prospective clinical trials and real-world screening settings before such tools can be integrated into personalized screening protocols.