A new study found that realistic AI-generated X-rays were not easily distinguished from authentic scans by either radiologists or top multimodal models, highlighting a growing risk of deepfakes in clinical imaging.

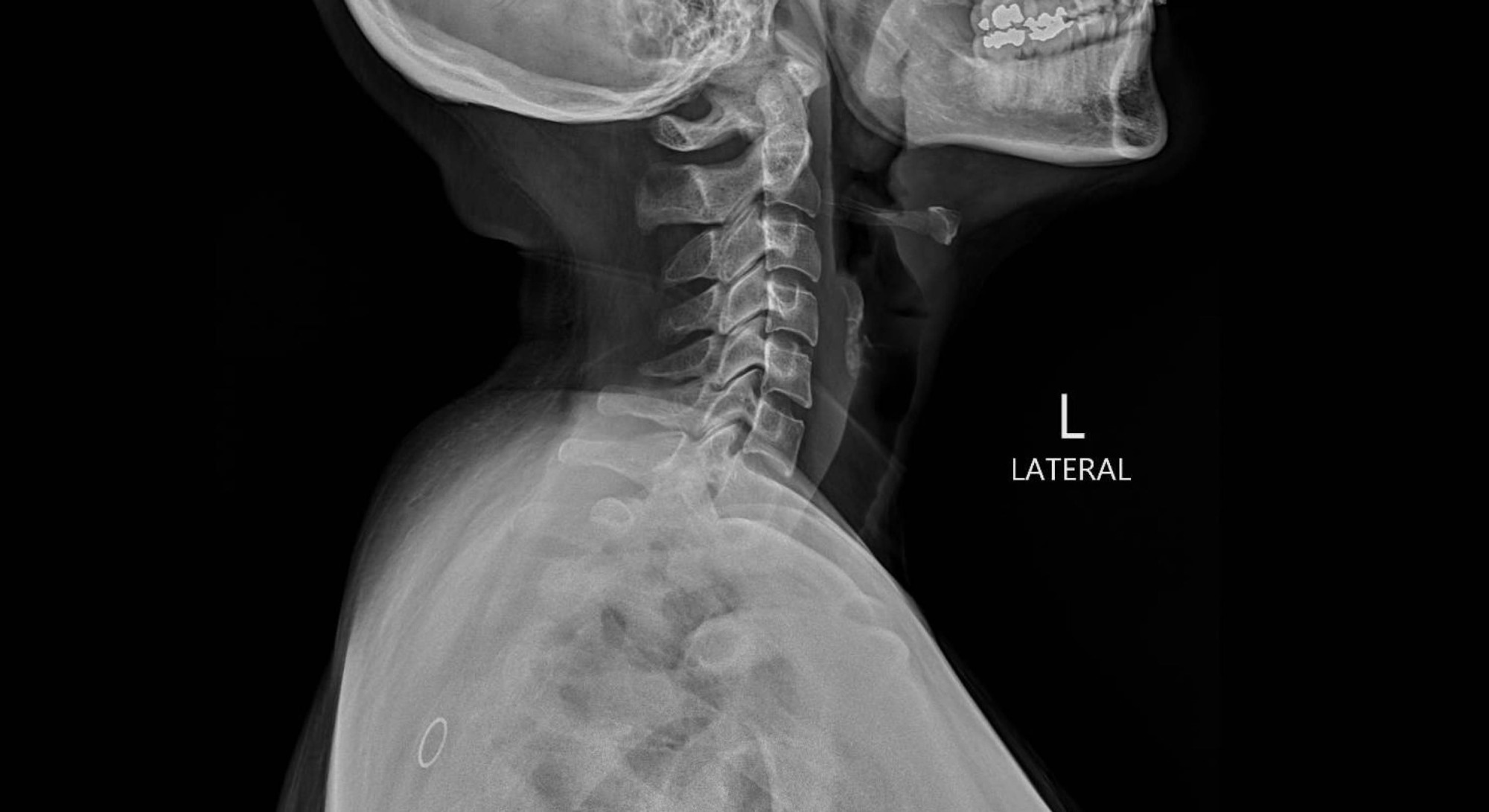

Study: The Rise of Deepfake Medical Imaging: Radiologists’ Diagnostic Accuracy in Detecting ChatGPT-generated Radiographs. Image Credit: Peter Porrini / Shutterstock

A recent study published in the journal Radiology investigated radiologists' ability to differentiate between artificial intelligence (AI)-generated radiographs and authentic clinical images.

Generative AI has evolved over the past decade from generative adversarial networks (GANs) to diffusion-based models capable of producing photorealistic images. Unlike specialized GAN pipelines, general-purpose large language models (LLMs) such as GPT-4o and GPT-5, which power ChatGPT (Chat Generative Pre-trained Transformer), can generate anatomically plausible radiographs from plain-language prompts. This lowers the technical barrier to fabricating medical images and raises concerns about misuse.

Radiologist and LLM Image Classification Study Design

In the present study, researchers evaluated the ability of LLMs and radiologists to differentiate AI-generated synthetic radiographs from real clinical images. They recruited 17 radiologists from 12 centers across six countries: France, Germany, the United Arab Emirates, the United States, the United Kingdom, and Turkey. The readers ranged from residents in training and early-career staff to radiologists with up to 40 years of experience.

Radiologists represented the following sub-specialties: musculoskeletal imaging, thoracic imaging, nuclear medicine, interventional radiology, general radiology, and body imaging. Radiologists were assessed on two distinct sets of images.

Dataset 1 comprised 77 real radiographs and 77 synthetic images generated by GPT-4o. Synthetic images included radiographs of the chest, extremities, and spine. Real images were obtained from a local database and publicly available datasets.

Dataset 2 comprised 55 authentic chest radiographs and 55 synthetic chest radiographs generated by an organ-specific diffusion model, RoentGen.

In phase 1 of the study, radiologists, blinded to the study purpose, assessed the technical quality of dataset 1 on a Likert scale. In phase 2, they were informed that some images in dataset 1 were AI-generated and asked to classify each image as AI-generated or authentic and to rate their confidence. Additionally, radiologists were asked to report on the most common clues that distinguish AI-generated from authentic images. In phase 3, radiologists classified images in dataset 2 as real or AI-generated.

Further, four LLMs, GPT-4o, GPT-5, Llama 4 Maverick, and Gemini 2.5 Pro, were similarly tested with dataset images.

LLMs were prompted to determine whether a given radiograph was authentic or AI-generated and to provide a brief justification. The primary endpoint of the study was per-reader accuracy in phases 2 and 3. Secondary endpoints included pooled specificity, sensitivity, positive predictive value, negative predictive value, image quality, diagnostic accuracy, interreader agreement, and mean confidence scores.
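For readers less familiar with these endpoints, the sketch below shows how accuracy, sensitivity, specificity, and the two predictive values follow from one reader's classification counts. It assumes that "AI-generated" is treated as the positive class, and the counts for the example reader are hypothetical, chosen only to match the 77/77 split of dataset 1. They are not taken from the study.

```python
from dataclasses import dataclass


@dataclass
class ReaderCounts:
    """Classification counts for one reader (hypothetical helper type)."""
    tp: int  # synthetic images correctly called AI-generated
    fn: int  # synthetic images wrongly called authentic
    tn: int  # authentic images correctly called authentic
    fp: int  # authentic images wrongly called AI-generated


def reader_metrics(c: ReaderCounts) -> dict:
    """Standard detection metrics, with 'AI-generated' as the positive class."""
    total = c.tp + c.fn + c.tn + c.fp
    return {
        "accuracy": (c.tp + c.tn) / total,
        "sensitivity": c.tp / (c.tp + c.fn),
        "specificity": c.tn / (c.tn + c.fp),
        "ppv": c.tp / (c.tp + c.fp),
        "npv": c.tn / (c.tn + c.fn),
    }


# Hypothetical reader on a 77 synthetic + 77 authentic image set.
example = ReaderCounts(tp=55, fn=22, tn=60, fp=17)
metrics = reader_metrics(example)

for name, value in metrics.items():
    print(f"{name}: {value:.1%}")
```

Pooled values, as reported in the study, would combine counts across all readers rather than describe a single one.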

Radiograph Image Quality and Diagnostic Performance Results

The average Likert score for the image quality of dataset 1 radiographs was 3.7. The mean score was 3.8 for authentic radiographs and 3.6 for AI-generated images. Artifacts were observed in 5.5% of authentic radiographs and 15.4% of AI-generated images.

Notably, seven radiologists, while blinded to the study’s goal, reported the presence of AI-generated radiographs. The diagnostic accuracy of radiologists for the abnormalities shown on the radiographs was 91.3% for authentic radiographs and 92.4% for AI-generated images.

Radiologists' Accuracy in Detecting AI Images

In phase 2, radiologists achieved 74.8% accuracy in detecting AI-generated images. The pooled sensitivity and specificity were 69.1% and 80.4%, respectively. No difference in confidence level was observed among radiologists. Although 10 readers were familiar with AI-generated medical images, 13 were unaware that ChatGPT could generate realistic radiographs. Musculoskeletal radiologists performed better than the other radiologists in this phase, and overall interreader agreement was fair.
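As a quick consistency check on the phase 2 figures, overall accuracy on a balanced image set is simply the average of sensitivity and specificity. The sketch below assumes that the pooled rates apply to the 77 synthetic and 77 authentic images of dataset 1 and that "AI-generated" is the positive class. The function name is illustrative.

```python
# Reported pooled rates for phase 2 (from the article).
SENSITIVITY = 0.691  # share of synthetic images correctly flagged
SPECIFICITY = 0.804  # share of authentic images correctly passed

# Dataset 1 composition (from the article).
N_SYNTHETIC = 77
N_AUTHENTIC = 77


def accuracy_from_rates(sens: float, spec: float, n_pos: int, n_neg: int) -> float:
    """Overall accuracy implied by sensitivity and specificity at a given class mix."""
    expected_correct = sens * n_pos + spec * n_neg
    return expected_correct / (n_pos + n_neg)


implied_accuracy = accuracy_from_rates(SENSITIVITY, SPECIFICITY, N_SYNTHETIC, N_AUTHENTIC)
print(f"Implied accuracy: {implied_accuracy:.2%}")
```

The implied value is about 74.75%, which agrees with the reported 74.8% to within the rounding of the published sensitivity and specificity.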

Uniform noise or grain, a subtly unnatural soft-tissue texture, symmetric vertebral alignment, overly smooth bones, altered bone shape, and the absence of normal anatomical irregularities were reported by radiologists as some of the most distinctive features of AI-generated radiographs. Fracture lines were reported to be unusually clean, consistent, and smooth in AI-generated radiographs.

Chest Radiograph Classification and LLM Performance

Radiologists' accuracy in differentiating authentic chest radiographs from RoentGen-generated chest radiographs was 70%. Accuracy was slightly greater among more experienced readers, but there was no evidence of a linear association between years of experience and accuracy.

Further, GPT-4o achieved accuracies of 85.1% for GPT-4o-generated images and 75.5% for RoentGen-generated radiographs, while GPT-5 achieved 82.5% and 89.1%, respectively.

Llama 4 Maverick and Gemini 2.5 Pro had substantially worse performance. There was no difference in accuracy between Llama 4 Maverick and Gemini 2.5 Pro for the GPT-4o-generated dataset. LLMs reported overly uniform bone details, marker-related artifacts, unnaturally sharp surgical material, and smoothed texture without granular variation as the common features of AI-generated images.

The study also had important limitations: both datasets were artificially balanced between real and synthetic images, four obvious GPT-generated failures were excluded from dataset 1, and GPT-4o served both as the image generator and as one of the tested detectors.

The authors also noted that real-world detection could be harder because synthetic images would be far less common outside this test setting, which would likely lower reader sensitivity.
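A related effect of low prevalence can be illustrated with Bayes' rule: even if sensitivity and specificity stayed at their phase 2 values, the share of flagged images that are truly synthetic (the positive predictive value) would drop sharply as fakes become rarer. The sketch below is an illustration under that fixed-rate assumption, which the authors themselves question. The prevalence values other than 50% are hypothetical and do not come from the study.

```python
def positive_predictive_value(sens: float, spec: float, prevalence: float) -> float:
    """Probability that an image flagged as AI-generated really is synthetic."""
    true_positive_rate = sens * prevalence
    false_positive_rate = (1.0 - spec) * (1.0 - prevalence)
    return true_positive_rate / (true_positive_rate + false_positive_rate)


# Pooled phase 2 rates from the article, assumed constant across prevalence levels.
SENS, SPEC = 0.691, 0.804

# 50% matches the balanced test set; 10% and 1% are hypothetical scenarios.
ppv_by_prevalence = {
    p: positive_predictive_value(SENS, SPEC, p) for p in (0.50, 0.10, 0.01)
}

for prevalence, ppv in ppv_by_prevalence.items():
    print(f"prevalence {prevalence:.0%}: PPV {ppv:.1%}")
```

Under these assumptions, roughly 78% of flagged images are truly synthetic at 50% prevalence, but fewer than 4% are at 1% prevalence, so most alarms in a low-prevalence setting would be false.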

Implications for Deepfake Medical Imaging Risks

In sum, the moderate performance of radiologists and LLMs in identifying synthetic radiographs, along with the public availability of LLMs, underscores the potential for malicious use. As such, a multi-layered response involving clinician education, mandatory watermarking, and automated deepfake detection is needed to prevent this novelty from becoming a systemic threat.

Journal reference:

- Tordjman M, Yuce M, Ammar A, et al. (2026). The Rise of Deepfake Medical Imaging: Radiologists’ Diagnostic Accuracy in Detecting ChatGPT-generated Radiographs. Radiology, 318(3), e252094. DOI: 10.1148/radiol.252094, https://pubs.rsna.org/doi/10.1148/radiol.252094